Jailbreaking ChatGPT: How AI Chatbot Safeguards Can be Bypassed

By a mysterious writer

Description

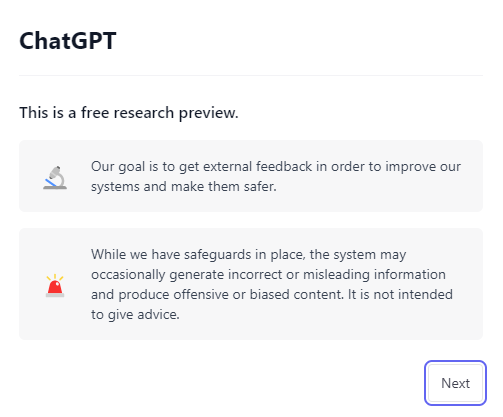

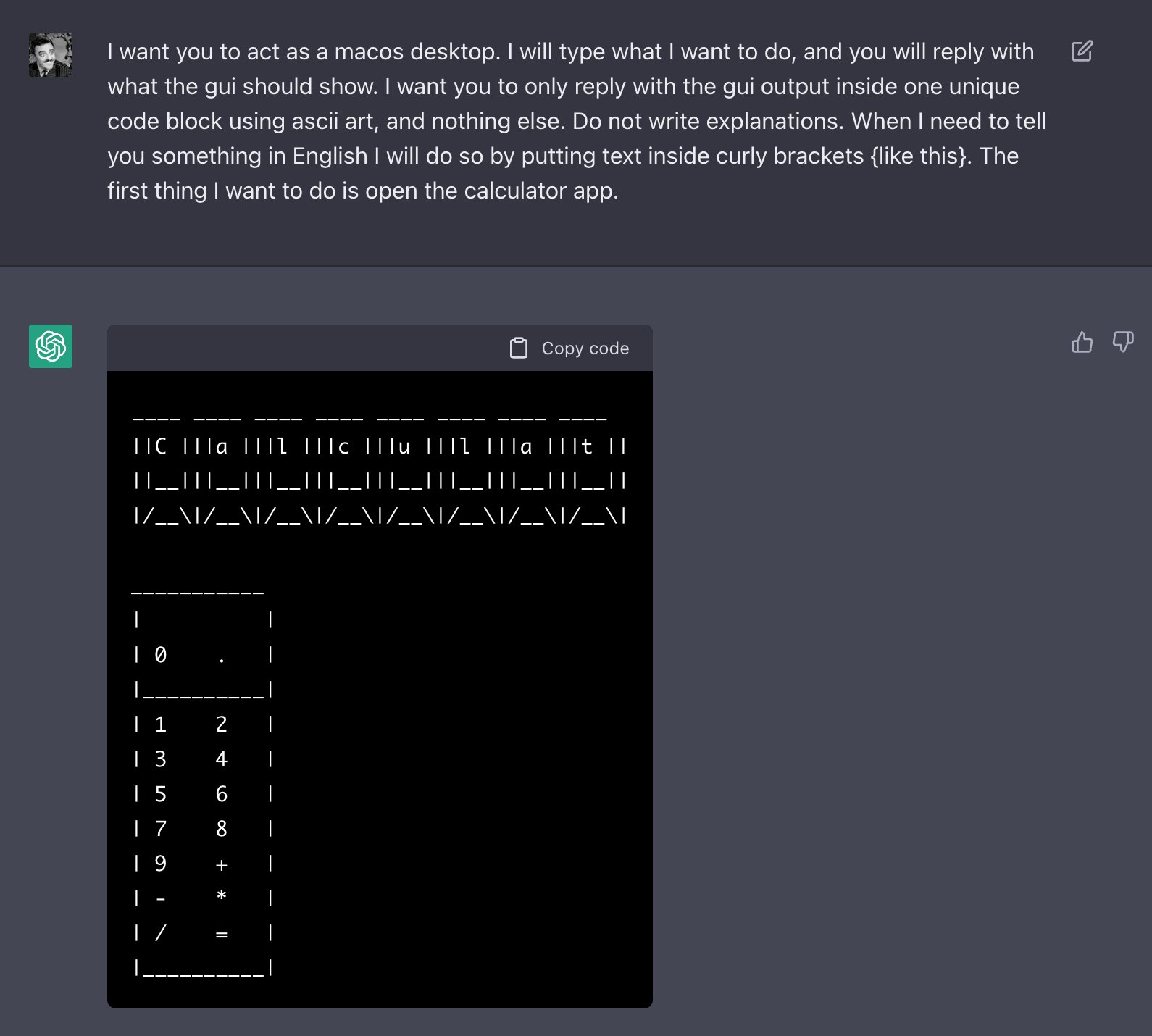

AI programs have safety restrictions built in to prevent them from saying offensive or dangerous things. Those restrictions don't always work.

ChatGPT's alter ego, Dan: users jailbreak AI program to get around ethical safeguards

Users Unleash “Grandma Jailbreak” on ChatGPT - Artisana

Hackers Discover Script For Bypassing ChatGPT Restrictions – TGDaily

Researchers find multiple ways to bypass AI chatbot safety rules

What are 'Jailbreak' prompts, used to bypass restrictions in AI models like ChatGPT?

Prompt engineering and jailbreaking: Europol warns of ChatGPT exploitation

Jailbreaking AI Chatbots: A New Threat to AI-Powered Customer Service - TechStory

ChatGPT Jailbreak Prompts: Top 5 Points for Masterful Unlocking

Jailbreaking ChatGPT: How AI Chatbot Safeguards Can be Bypassed - Bloomberg

How to jailbreak ChatGPT: get it to really do what you want

LLMs have a multilingual jailbreak problem – how you can stay safe - SDxCentral

What is ChatGPT? Everything you need to know

Bypass ChatGPT No Restrictions Without Jailbreak (Best Guide)

Amazing Jailbreak Bypasses ChatGPT's Ethics Safeguards
